Where AI Belongs (And Where It Doesn't)

The disclosure question is settled. This is the production question: where does AI help, where does it hurt, and how do you tell the difference before something ships with extra legs.

TL;DR

A Google Cloud-commissioned Harris Poll of 615 developers found that 90% are using AI in their workflows. By contrast, GDC's larger, vendor-neutral State of the Industry survey of over 2,300 developers suggests only around a third personally use AI tools, and more than half now think generative AI is bad for the industry.

The mistake most studios make is not using too much AI. It is using it in the wrong places, usually because nobody stopped to ask what the work was actually for.

This piece maps the full pipeline into three categories: work you should automate without guilt, work that benefits from AI but requires human direction, and work you need to protect regardless of the cost savings.

The studios getting this right did not start with the tool list. They started with the question of where human judgment was load-bearing, and found the tools that handled everything else.

Generative AI for final art assets is still a war. The studios that have drawn a clear line are the ones not getting caught. The ones that haven't are one launch away from a controversy they cannot cat their way out of.

This is the third piece in a series on AI and the games industry.

The Revenge of Pencilslop started it: why audiences are actively training themselves to detect AI output, and what that movement means for studios that have been treating disclosure as optional.

The AI Cat Test followed: what three AI controversies in six months revealed about community trust, why the middle tier of studios is most exposed, and what the apology template cannot fix.

This piece answers the prior question both of those pieces assumed: where should AI go in the pipeline in the first place?

A founder I work with called me a few weeks before his Early Access launch.

Strong systems core. Solid production pipeline. He had just discovered he could generate his entire optional narrative layer with AI in 48 hours.

The question was simple: should we?

I asked whether players were engaging with the narrative layer he already had.

Long pause. Not really.

“So the question,” I said, “is not whether AI can generate it faster. The question is whether anyone was reading it in the first place.”

He went quiet.

Then: “That’s a different problem, isn’t it.”

It is. And it is the problem most studios reach for AI to solve before they have diagnosed what the actual problem is.

Automate Freely. Augment Carefully. Protect Fiercely.

There is a single question that organises the whole pipeline:

Does human judgment here create irreplaceable value, or is this skilled labour applied to mechanical repetition?

Every production phase falls somewhere on that axis. Work that is mechanical repetition belongs in the first category. Work that benefits from AI but requires human creative direction belongs in the second. Work where the human judgment is the entire point belongs in the third.

Automate Freely

These are the phases where the honest answer is: nobody loved doing this work.

Rotoscoping is frame-by-frame isolation of characters from video backgrounds. Precision-intensive, creatively null, capable of stretching across weeks on a single scene. Historically one of the most gruelling jobs in the entire pipeline. AI-assisted tools like Adobe After Effects Roto Brush 2 and Boris FX Silhouette now propagate matte masks across a clip’s duration automatically. What a team of ten once took weeks to complete, two artists reviewing AI output can finish in hours. The creative work is identical. The endurance test is gone.

Rigging is the same story. A mid-complexity 3D character took 12 to 20 hours to rig manually. Good riggers have always been rare and overbooked. Tools like Adobe Mixamo, AccuRIG, and Auto-Rig Pro compress that timeline dramatically for humanoid and biped characters. Complex creature rigs still need manual oversight. But the floor was the problem. It was strangling production.

QA playtesting belongs here too. AI-driven agents run millions of gameplay scenarios autonomously: exploring levels, finding stuck spots, logging bugs. EA used comparable approaches for FIFA, covering scenarios no human testing team could address comprehensively. Running the same QA scenario for the four hundredth time to surface a memory leak is not a creative loss. That is the right tool finding its right job.

Localisation, NPC bark libraries, code completion: all the same category. Ubisoft’s Ghostwriter generates first drafts of ambient NPC dialogue that writers select, edit, and approve. The writers still make every meaningful decision. They just make fewer trips through the same territory.

Automate this work without guilt. Protecting mechanical repetition as if it were craft is not protecting craft. It is protecting the wrapper around craft.

Augment Carefully

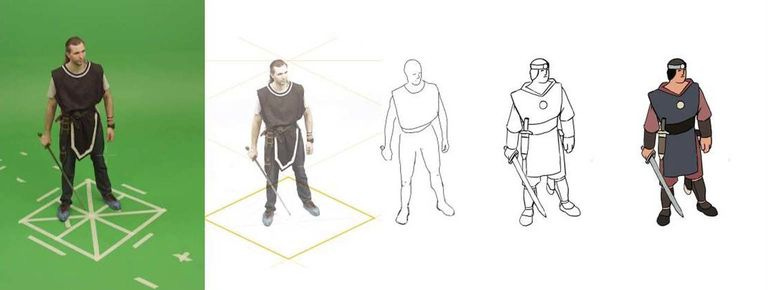

Source: Dragon Snacks Games (the above is not generative AI)

This is the category where AI genuinely accelerates human creative direction, but where the human direction has to be there first.

Concept art generation is the clearest example, and the most misunderstood. Tools like Midjourney, Stable Diffusion, and Adobe Firefly have a legitimate role in the phase before pre-production: building a business case. Visualising a creative direction before committing budget to it, showing stakeholders what a world could look like, getting a greenlight. That is it.

And even there, the limitation is real. Generative AI and LLMs produce the mean. The statistically central version of whatever you asked for. For a business case, that can be enough to get the room to say yes. But it is also the ceiling. You will never get a flash of surprise from it. You will never get something unmistakably from a specific person.

I have been watching two collaborators make something that could only have come from them.

I have been working with the Dragon Snacks Games team for the past year. The work their art director Stephen Langmead has done has been mind-blowing: beautiful, with a flash of Vess and Madureira that is very... Stephen Langmead.

Paul Murphy’s prose operates at a completely different level. Neither of these is the mid or the median. It is true human craftsmanship at its peak, the kind that can only be attained from years of practice and learning.

Here is why. Stephen’s work carries the accumulated weight of everything he has ever seen, felt, and noticed. A walk in the park. An insect on the lawn that morning. The specific light at a specific hour.

Paul’s writing carries the same thing: the gardening, or the grievance over my eating his lunch that fuelled him that afternoon. All of it feeds into the work in ways neither of them could fully explain. That diffuse thinking, that life lived between the hours at the desk, is not decoration. It is the source material.

LLMs are super librarians. They have read everything. They can tell you where the book is. But very few librarians can design a bridge with human ennui and caprice, because they did not build the last one. AI has no walk in the park. It has no insect on the lawn. It has only what it was fed, and what it was fed was other people’s finished work, stripped of everything that made it surprising in the first place.

Nothing generates that. Nothing approximates it. That work exists because specific people with specific histories made specific decisions, and every one of them is visible in the result.

Once pre-production begins, this is the artists’ domain. Where they use AI tools in their own process is their decision. Generative AI should not be directed at them as a production requirement, and it should never be used to produce final character, creature, or world identity art assets. The controversy around AI in game art is real, it is not going away, and the studios treating it as settled are the ones getting caught with horses that have too many legs.

The one exception everyone agrees on: placeholder art. Deliberately rough stand-ins used internally during development, tagged as AI, never shipped. That is the legitimate use case. Everything else is contested ground.

The AI does not have a vision of what the game should feel like; it has a statistical mean of everything it was trained on.

When you use it well: you are building a business case before pre-production begins, then handing a clear creative direction to the humans who will actually build it.

When you use it badly: you are replacing the artists who would have made something worth playing.

The same principle runs across the rest of the category:

Texture and 3D assets: Adobe Substance 3D’s Firefly integration, Tripo AI, Meshy. Powerful tools that compress pipelines commercially, particularly for indie developers. But they amplify a creative direction rather than originate one. A studio with a clear brief and a trained custom model built on its existing visual language will get dramatically better results than one treating these as a blank slate.

Adaptive music: Soundraw, Beatoven.ai. Royalty-free tracks that respond to gameplay state. The composition is AI. The creative brief is yours.

AI voice: ElevenLabs, Respeecher. Legitimate for placeholder audio, ambient NPC voices, and localisation acceleration. Not for hero characters or anything carrying emotional weight. Beth Park, the lead performance director on Black Myth: Wukong, said it around the time of her BAFTA Breakthrough 2024 nomination: AI can mimic tone. It cannot capture the feeling behind it.

Protect Fiercely

The third category is the one where cost savings are a trap.

There is a specific texture to work made by someone who genuinely cared. An unexpected choice in the dialogue. A piece of music that resolves somewhere you did not expect. A character design where something specific is happening in the eyes and you cannot immediately say why it works. None of that is accidental. None of it can be generated.

A 2025 study in Scientific Reports confirmed it: the top 10% of human creators still significantly outperform even the strongest AI models in storytelling and narrative design. What AI produces at its ceiling is the mean. Statistically central. Sometimes useful. But structurally incapable of surprise, because surprise requires a perspective, and a perspective requires a life.

The cats in Crimson Desert are the simplest illustration. When Crimson Desert launched in March 2026, players discovered protagonist Kliff could carry a cat on his shoulder through the entire game. Through shops. Through arm-wrestling matches. The same day, Pearl Abyss had shipped undisclosed AI-generated paintings with horses carrying extra legs. Both stories went viral simultaneously. The horse was evidence nobody checked. The cat was evidence someone cared.

Players feel the difference between a world someone loved building and a world someone filed as a deliverable. They cannot always articulate it. They feel it. Find that layer in your pipeline and do not let it become a cost optimisation exercise.

The Practical Map

The three categories cover the full production pipeline as follows:

Automate Freely:

Rotoscoping

Rigging for standard biped characters

QA playtesting

NPC bark generation

Localisation first drafts

Code completion for repetitive implementation

Ambient voice generation

Environmental prop placement

Augment Carefully:

Concept art ideation

Texture and 3D asset generation (with human direction)

Adaptive music

Narrative brainstorming and structure

Voice placeholder for non-hero characters

World-building lore consistency checking

Protect Fiercely:

Hero character performance

Authorial narrative and the specific decisions that give a game its personality

Creative direction

The human judgment that decides whether a thing is worth making at all

The studios navigating this well share one characteristic. They did not start with the tool list. They started with the question of where human judgment was load-bearing in their pipeline, and found the tools that handled everything else. The tool list is a downstream decision. The map is the work.

The Reframe

The digital art transition of the 1990s did not destroy illustration. It destroyed the demand for people doing mechanically repetitive illustration tasks, while expanding the total creative economy and making it genuinely global. The same logic is running now, and the same confusion is happening: studios conflating the automation of mechanical work with the automation of creative vision, as if they were the same thing.

They are not the same thing. They have never been the same thing. The error in the 1990s belonged to the traditional illustrators who refused to touch digital tools and were left behind. The error now belongs to the studios touching every part of their pipeline with AI because a 90% adoption figure sounds like a mandate, without asking where the value they are actually selling to players comes from.

The tools are genuinely good. More of them are genuinely good than the anxious side of the conversation acknowledges, and the time savings in the right places are real and significant. A two-person team can now build what previously required twenty, in the categories where mechanical repetition was the constraint.

But the map still matters. A team of two using AI to accelerate a genuine creative vision will ship something worth playing. A team of twenty using AI to skip the creative vision question will ship faster into mediocrity. The volume of games is increasing. The signal-to-noise ratio is not. The pipeline map is how you stay on the right side of that ratio.

Abbas Saleem Khan is a Principal Consultant at Llama & Griffin, advising game studios, streaming platforms, and investment funds across six continents. He writes The Pattern Recognition: gaming industry intelligence 12 to 24 months before it becomes consensus.